Are you left-brain dominant or right-brain oriented? Are you analytical or creative? Are you logical or imaginative? Are women more right-brained than left-brained? Does your team have a good mix of left- and right-brained thinkers?

While the asymmetry between the two sides of the brain is undeniable, scientists now believe the popular left brain / right brain framework is completely bogus. Don’t blame yourself too much: TIME, HBR and Psychology Today have believed and propagated those ideas as well. Let’s start by exploring this urban myth about our brains and intelligence a little. We will go back in time to meet a 700-million-year-old creature called Nematostella vectensis, also known as the starlet sea anemone, and will eventually make it to some emerging ideas on building intelligent learning systems and A.I., if you last till the end!!

Due credit needs to be given to un-roads and terrible Bangalore traffic before we begin. If not for those, I wouldn’t have devoted hours to listening to every show on NPR, learning valuable skills including how to negotiate with hostage takers, how cult leaders manipulate their followers and how to build a loyal base like that of the horror series Saw. That’s where I chanced upon research by psychiatrist and former Oxford literary scholar Iain McGilchrist and the discussion One Head, Two Brains on my favorite show, Hidden Brain.

Firstly, all skills need both sides of the brain. Good math needs both sides to contribute. Communication thru language draws on more than one side to be effective. Iain’s theory is even more interesting than that argument. He explored whether only humans have asymmetric brains or whether primitive animals have them too. Most animals with neural networks, including nematode worms with just about 300 neurons and the humble 700-million-year-old starlet sea anemone (Nematostella vectensis), have asymmetrical brains. Surely they were not trying to excel at math and singing!! Shankar Vedantam and Iain discuss this in the podcast.

For a long time, the popular representations of hemispheric differences focused on what different parts of the brain do. Iain says what really distinguishes the hemispheres is not what they do but how they do the same things differently.

Iain believes the brain is divided into two hemispheres so that it can produce two different views of reality. One of the hemispheres, the right, focuses on the big picture. The left focuses on details. Both are essential. If you can’t see the big picture, you don’t understand what you’re doing. If you can’t home in on the details, you can’t accomplish the simplest tasks. This fundamental difference in orientation turns out to have profound consequences for everything the two hemispheres do.

If this were true, we have two brains. Both working simultaneously and interpreting the same reality. One working with the details and working thru the parts. The other ignoring details and seeing the whole. Both views dancing together. Like when you play music or play a sport. The mechanics of executing the act intertwined with the aesthetics and artistry.

This is doubly mind blowing (bad pun intended). This learning made me wonder if it is the key to taking the development of A.I., M.L. and Analytics to the next level. For all of us playing Zeno’s paradox while trying to enable intelligent decisions using algorithms in real life, this insight into how human intelligence functions can be very powerful. I decided to generalize this idea and explore if there is any merit to applying it to crossing the chasm between Specific / Weak A.I. and General A.I.

(Don’t worry, those are not very complex ideas. Specific / weak A.I. represents intelligent algorithms, like image recognition trained on hordes of data, that are able to do just that one thing. They are not able to understand the WHY; they just brute force thru pattern matching and rapid computations. General A.I. is a broader, more extendable intelligence. The TEDxCERN talk by leading A.I. researcher Gary Marcus, Why toddlers are smarter than computers, and the article A.I is still dumber than a 5 year old elaborate on the chasm between the two.)

Unlike with every other crazy idea I had while wading thru Bangalore traffic, I decided to research if there is any merit to this line of thinking. Behold!! I found that a lot of researchers are now reversing course and pursuing diametrically opposite ways of building intelligence, to rival the all-powerful, gobbling-Big-Data-as-Bantha-Fodder Deep Learning algos (Bantha Poodoo in Huttese, for the purists) dominating all conversations on A.I.

These researchers are developing intelligence based on how humans (in particular children) learn. Thru play, by guessing, by role playing in imaginary scenarios and by defining less precise but easy-to-understand attributes, to name a few. And they often involve little or no real data!!

Here is an INCOMPLETE list of interesting developments that I used my right brain to oversimplify. My attempt here is to apply the Feynman technique and articulate them without any jargon (so hard!! I failed). Feel free to comment and add more to that list using both halves of your brain.

Imagine views you haven’t seen yet

A 2-3 year old learns of a dog in a picture book, sees a couple in real life and very soon is able to identify ‘bhow bhows’ from various angles. She doesn’t need to be trained on 1000 images of dogs, from every angle and of every breed out there. We don’t need to have seen every type of dog from every angle. As humans we guess and extrapolate.

This is the intuition that Dr. Geoffrey Hinton, pioneer in Convolutional Neural Networks (CNNs) for image recognition, is building on with his idea of Capsule Networks. Pure CNNs don’t generalize well to unlearned views, and they struggle with rotations and with the orientation between features. Check the post Demystifying “Matrix Capsules with EM routing”, which makes the original paper Matrix Capsules with EM routing more accessible to most of us.

Bridge high level human concepts with low level features internal to AI / ML models

Humans can recognize a zebra, identify a friend from afar or diagnose a disease by using a few high level features that may be hard to define precisely, yet are intuitive to us. For example, the stripes of a zebra, the unique gait of your friend or the microaneurysms used to diagnose diabetic retinopathy. The most powerful image recognition algorithms piece together the proverbial forest from the trees of pixel level features. Quite bottom-up, actually. Marrying these bottom-up low level features with high level human-defined features, such as stripes or curliness of hair, may be the key not only to validating these monstrous black box algorithms, incorporating human / SME instincts into them and improving their intuitiveness / explainability, but also to aiding decision making (which, by the way, is the unfulfilled goal of most of these).

This is exactly the intuition behind a recent paper by the Google A.I. team, Interpretability Beyond Feature Attribution: Quantitative Testing with Concept Activation Vectors (TCAV), which Sundar Pichai talked about at Google I/O 2019 as one of the two key weapons to make AI accessible to everyone and protect against bias. One can debate whether this approach improves the intelligence in the algorithm (it does, as it allows high level features not constrained by input features or training data) or mostly makes the inexplicable intelligence internal to these algorithms accessible to us. Either way, the point remains that we are using two brains, left and right, to interpret the same reality in different ways.
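For the code-minded, here is a minimal Python sketch of the concept-vector idea, under loud assumptions. The real TCAV method trains a linear classifier on a layer's activations and takes its normal vector as the concept direction; this toy swaps in a simple difference of means instead. The function names, the 2-D "activations" and the gradient numbers are all made up for illustration and come from no real model or library.

```python
def mean_vector(rows):
    """Column-wise mean of a list of equal-length vectors."""
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]


def concept_activation_vector(concept_acts, random_acts):
    """Unit vector pointing from 'random' activations toward 'concept' ones.

    Simplification: a difference of means stands in for the normal vector
    of a trained linear classifier.
    """
    mu_concept = mean_vector(concept_acts)
    mu_random = mean_vector(random_acts)
    diff = [c - r for c, r in zip(mu_concept, mu_random)]
    norm = sum(d * d for d in diff) ** 0.5
    return [d / norm for d in diff]


def tcav_score(gradients, cav):
    """Fraction of inputs whose class score rises along the concept direction."""
    positive = sum(
        1 for g in gradients
        if sum(gi * ci for gi, ci in zip(g, cav)) > 0
    )
    return positive / len(gradients)


# Made-up layer activations for "striped" pictures vs. random pictures.
striped = [[2.0, 0.1], [1.8, -0.1], [2.2, 0.0]]
random_pics = [[0.0, 0.1], [-0.2, -0.1], [0.2, 0.0]]
cav = concept_activation_vector(striped, random_pics)

# Made-up gradients of the "zebra" score w.r.t. the same layer, one per zebra photo.
zebra_gradients = [[0.9, 0.3], [0.5, -0.2], [-0.1, 0.4], [0.7, 0.0]]

# 3 of the 4 gradients point along the concept direction, so the score is 0.75.
print(tcav_score(zebra_gradients, cav))
```

A score close to 1 would suggest that "stripes" pushes the model toward "zebra" for most photos, which is the kind of human-readable check the paper is after.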

Simulate various scenarios to practice, so reality is just one of those scenarios

One of my all time favorite movie scenes is from Game of Shadows, where Sherlock Holmes, brilliantly re-imagined by Robert Downey Jr., fights in his mind in slow motion, anticipating the minutest reactions before executing the moves in a flash. This ability to simulate (create data) is very human. High performance athletes, method actors and writers all espouse visualization techniques as key to their magic.

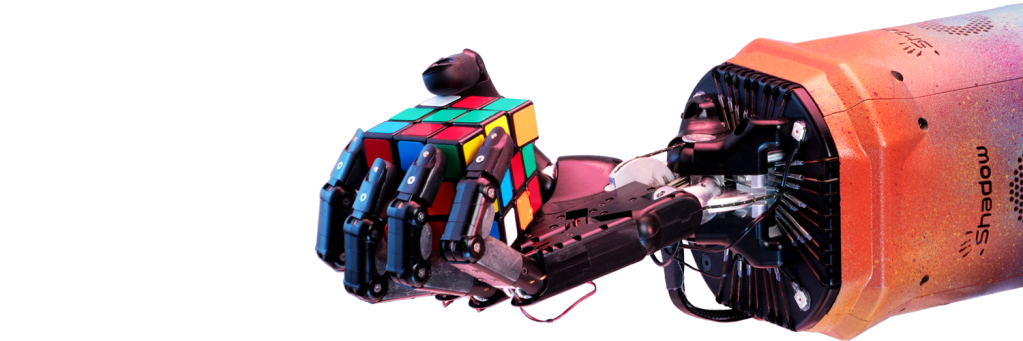

A recent stunt by OpenAI, solving the Rubik’s cube with a human-like, one-handed robotic arm, plays on this concept by simulating millions of progressively more difficult scenarios, varying attributes of handling a cube such as weight, friction and elasticity. Cheekily, the tests include a stuffed giraffe poking at the hand. They call the technique Automatic Domain Randomization (ADR): the algos are trained virtually before being transferred to the physical robot to face the real world. In many ways, my fascination with Agent Based Modeling to build prescriptive models falls in the same realm, of modeling a very complex system by building up the interactions from the ground up, starting with the physical properties of agents.
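To make the "progressively difficult scenarios" idea concrete, here is a toy Python sketch in the spirit of ADR. It is not OpenAI's implementation. The `toy_success` check, the `skill` counter and all the numbers are hypothetical stand-ins for a real simulator and a real learning policy. The only part borrowed from the idea above is the loop: start with one fixed world, widen a property's range when the learner copes at the edge of that range, and pull the range back when it doesn't.

```python
import random

# Nominal physical properties of the cube (illustrative values only).
NOMINAL = {"weight": 1.0, "friction": 1.0, "elasticity": 1.0}


def toy_success(env, skill):
    """Hypothetical stand-in for testing the policy in one simulated world.

    The policy succeeds only if no property has drifted further from
    nominal than its current skill can absorb.
    """
    drift = max(abs(env[name] - NOMINAL[name]) for name in NOMINAL)
    return drift <= skill


def adr_train(steps, step_size=0.05, seed=0):
    rng = random.Random(seed)
    # Start with no randomization: every range is just the nominal value.
    ranges = {name: [value, value] for name, value in NOMINAL.items()}
    skill = 0.1  # toy proxy for how much variation the policy tolerates
    for _ in range(steps):
        # Sample a world from the current ranges, then pin one randomly
        # chosen property to one edge of its range to probe that edge.
        env = {name: rng.uniform(lo, hi) for name, (lo, hi) in ranges.items()}
        name = rng.choice(sorted(ranges))
        side = rng.choice([0, 1])  # 0 = lower edge, 1 = upper edge
        env[name] = ranges[name][side]

        skill += 0.01  # pretend each training step improves the policy a bit

        direction = 1 if side == 1 else -1
        if toy_success(env, skill):
            # Coping at the edge: make future worlds harder by widening it.
            ranges[name][side] += direction * step_size
        else:
            # Struggling at the edge: pull it back, never past nominal.
            pulled = ranges[name][side] - direction * step_size
            clamp = max if side == 1 else min
            ranges[name][side] = clamp(NOMINAL[name], pulled)
    return ranges


for prop, (low, high) in adr_train(steps=50).items():
    print(prop, round(low, 2), round(high, 2))
```

The curriculum is never written by hand: the ranges grow only as fast as the (pretend) learner can keep up, which is what makes reality "just one of those scenarios".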

That’s a lot of ground we covered. Simply put, the next level of intelligent systems will not just be about massive algos crunching big data, but also about top-down intuition and hypothesis-driven thinking. More like human intelligence, driven by multiple brains in one head.

Maybe the human vs machine intelligence battle is being reported by media (and ironically algos!!) from just the one front where humans are losing: bottom-up, data-crunching pattern recognition. Maybe the other brains that evolution endowed us with are not so trivial for machines to replicate, as yet. Maybe for a long time to come the human and machine interaction model will be the winning combination. Just like we should no longer split the brain as left and right, we should no longer split intelligence as artificial and natural.

So here’s to a future where we aspire to celebrate multiple intelligences as modalities rather than abilities, not just in educating our children but in parenting the algorithms in our lives as well!!

This is a very interesting and very important perspective on the brain. I was reading a book called The Whole-Brain Child to develop my child’s big picture and detail mindsets in tandem; this could be something very interesting to keep in mind.